by simon | Aug 21, 2018 | Digital Insights

Widespread artificial intelligence, biohacking, new platforms and immersive experiences dominate this year’s Gartner Hype Cycle.

Waiting curbside for an Uber or Lyft driver might one day be the old-fashioned way of getting around. Instead, passengers will need to head to the helipad to catch a ride from a flying autonomous vehicle. Not only will these future taxis take to the sky to potentially reduce traffic, they’ll operate independently of a human pilot.

Realistically, the world (and the technology) aren’t quite ready for autonomous flying taxis. The first challenge will be mastering the autonomous technology, which is still at least five to 10 years away. According to a recent test by the Insurance Institute for Highway Safety, today’s Level 2 driver assistance systems are not capable of driving safely on their own. The production vehicle that can safely drive itself anywhere, anytime isn’t available at the local car dealer and won’t be for quite some time.

The concept of autonomous flying vehicles isn’t just for human passengers but can be applied to transport many other things such as medical supplies, packages, food delivery and more. Companies are actively investigating this technology as a way to deliver same-day packages or regularly send supplies to remote locations without a pilot. These are a real possibility in the next decade.

Fully autonomous flying vehicles are, in some cases, an easier problem to solve than autonomous vehicles on the ground because the airspace is highly controlled and there are fewer variables such as humans. There will still be unique regulatory and societal challenges (e.g., where would all the helipads go, and how do we prevent crashes?). Even so, flying autonomous vehicles are one of 17 new technologies to join the Gartner Hype Cycle for Emerging Technologies, 2018. The Gartner Hype Cycle focuses on technologies that will deliver a high degree of competitive advantage over the next decade.

This year, Gartner organized the 17 technologies into five major trends: Democratized artificial intelligence (AI), digitalized ecosystems, do-it-yourself biohacking, transparently immersive experiences and ubiquitous infrastructure.

“As a technology leader, you will continue to be faced with rapidly accelerating technology innovations that will profoundly impact the way you deal with your workforce, customers and partners. The trends exposed by these emerging technologies are poised to be the next most impactful technologies that have the potential to disrupt your business, and must be actively monitored by your executive teams,” says Mike Walker, research vice president at Gartner.

Trend #1: Democratized AI

AI, one of the most disruptive classes of technologies, will become more widely available due to cloud computing, open source and the “maker” community. While early adopters will benefit from continued evolution of the technology, the notable change will be its availability to the masses. These technologies also foster a maker community of developers, data scientists and AI architects, and inspire them to create new and compelling solutions based on AI.

For example, smart robots capable of working alongside humans, delivering room service or working in warehouses, will allow organizations to assist, replace or redeploy human workers to more value-adding tasks. Also in this category are autonomous driving Level 4 and autonomous driving Level 5, which replaced “autonomous vehicles” on this year’s Hype Cycle.

Autonomous driving Level 4 describes vehicles that can operate without human interaction in most, but not all, conditions and locations and will likely operate in geofenced areas. This level of autonomous car will likely appear on the market in the next decade. Autonomous driving Level 5 labels vehicles operating autonomously in all situations and conditions, and controlling all tasks. Without a steering wheel, brakes or pedals, these cars could become another living space for families, having far reaching societal impacts.

Trend #2: Digitalized ecosystems

Emerging technologies in general will require support from new technical foundations and more dynamic ecosystems. These ecosystems will need new business strategies and a move to platform-based business models.

“The shift from compartmentalized technical infrastructure to ecosystem-enabling platforms is laying the foundation for entirely new business models that are forming the bridge between humans and technology,” says Walker.

For example, blockchain could be a game changer for data security leaders, as it has the potential to increase resilience, reliability, transparency, and trust in centralized systems. Also under this trend are digital twins, virtual representations of real objects. Digital twins are beginning to gain adoption in maintenance, and Gartner estimates hundreds of millions of things will have digital twins within five years.

Trend #3: Do-it-yourself biohacking

2018 is just the beginning of a “trans-human” age where hacking biology and “extending” humans will increase in popularity and availability. This will range from simple diagnostics to neural implants and be subject to legal and societal questions about ethics and humanity. These biohacks will fall into four categories: technology augmentation, nutrigenomics, experimental biology and grinder biohacking.

For example, biochips hold the possibility of detecting diseases from cancer to smallpox before the patient even develops symptoms. These chips are made from an array of molecular sensors on the chip surface that can analyze biological elements and chemicals. Also new to the Hype Cycle this year is biotech, artificially cultured and biologically inspired muscles. Though still in lab development, this technology could eventually allow skin and tissue to grow over a robot exterior, making it sensitive to pressure.

Trend #4: Transparently immersive experiences

Technology, such as that seen in smart workspaces, is increasingly human-centric, blurring the lines between people, businesses and things, and extending and enabling a smarter living, work and life experience. In a smart workspace, electronic whiteboards can better capture meeting notes, sensors will help deliver personalized information depending on employee location, and office supplies can interact directly with IT platforms.

On the home front, connected homes will interlink devices, sensors, tools and platforms that learn from how humans use their house. Increasingly intelligent systems allow for contextualized and personalized experiences.


Trend #5: Ubiquitous infrastructure

In general, infrastructure is no longer the key to strategic business goals. The appearance and growing popularity of cloud computing and the always-on, always-available, limitless infrastructure environment have changed the infrastructure landscape. These technologies will enable a new future of business.

For example, quantum computing, with its complicated systems of qubits and algorithms, can operate exponentially faster than conventional computers. In the future, this technology will have a huge impact on optimization, machine learning, encryption, analytics and image analysis. Though general-purpose quantum computers will probably never be realized, the technology holds great potential in narrow, defined areas.

A second new technology in this trend is neuromorphic hardware. These are semiconductor devices inspired by neurobiological architecture, which can deliver extreme performance for things like deep neural networks, using less power and offering faster performance than conventional options.

Gartner clients can read the full report in Gartner Hype Cycle for Emerging Technology, 2018 by Mike Walker. This research is part of the Gartner Trend Insight Report “2018 Hype Cycles, Riding the Innovation Wave.” With profiles of technologies, services and disciplines spanning over 100 Hype Cycles, this Trend Insight Report is designed to help CIOs and IT leaders respond to the opportunities and threats affecting their businesses, take the lead in technology-enabled business innovations and help their organizations define an effective digital business strategy.

by simon | Aug 21, 2018 | Digital Insights, Uncategorised

The component parts of a successful search engine optimization (SEO) strategy may have remained relatively constant, but their definition and purpose have changed entirely. Driven by trends like visual search and voice search, the industry’s scope has expanded and evolved into something more dynamic.

This delivers on a genuine consumer need. According to a report from Slyce.it, 74 percent of shoppers report that text-only search is insufficient for finding the products they want.

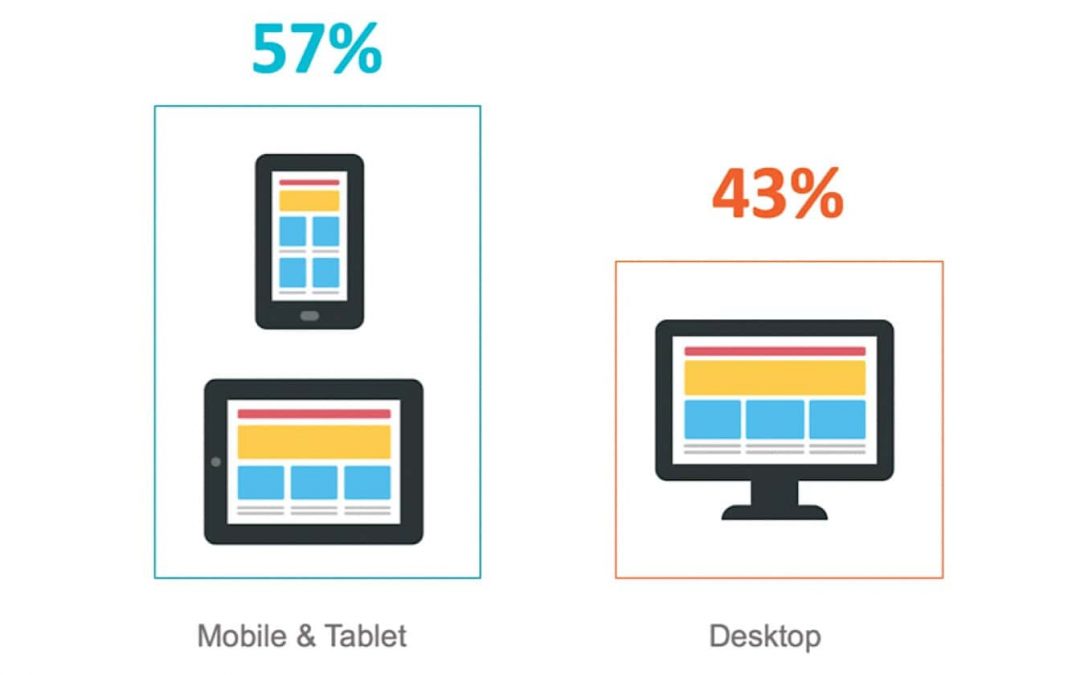

It is unsurprising that Gartner research predicts that by 2021, early adopter brands that redesign their websites to support visual and voice search will increase digital commerce revenue by as much as 30 percent. Through visual and voice search, marketers can engage more meaningfully with their audience at each stage of their purchase journey. This means moving beyond the static websites of old toward more interactive experiences that can be accessed anywhere, any time, on any device.

Sensory search

Search visibility still matters, but the concept of “rankings” is hard to pin down when we factor in the proliferation of the Internet of Things (IoT) devices and the machine learning algorithms that fine-tune the search results.

Brands’ content must be relevant to a query, but those queries are getting more specific and contextual; relevance must be combined with usefulness at the moment.

Underpinning these shifts are two trends that are revitalizing the search industry: visual search and voice search. Though these are linked and can be grouped under the umbrella of “sensory search,” they are separate disciplines with different implications for search marketers.

For those that engage early by implementing technical best practices and adapting SEO strategies, they represent some of the foremost opportunities in digital marketing for the coming years.

Visual search

For many years, Google has provided the ability to upload an image or image URL to generate a search engine result page (SERP) in the search toolbar within Google Images.

The next generation of visual search turns a smartphone camera into a visual discovery tool. It can use an image as a search query, which allows consumers to search for styles and objects that they would otherwise struggle to define. The most popular visual search technologies are Google Lens and Pinterest Lens, but Amazon, Bing and a growing list of major retailers are all investing heavily in this area. Visual search is also a building block for augmented reality and virtual reality interactions.

There is also growing evidence that this technology is taking off with consumers.

According to Grand View Research:

The global image recognition market size was valued at USD 16.0 billion in 2016 and is likely to expand at a compound annual growth rate of 19.2 percent from 2017 to 2025.

This is still a sizeable opportunity for retailers, too, as only 32 percent are either already using artificial intelligence (AI) for visual search or plan to do so within the next year.
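To get a feel for what the Grand View Research growth rate quoted above implies, the compound-growth arithmetic can be sketched in a few lines of Python. The function name is hypothetical, and treating the 2016 valuation as the base with nine yearly compounding periods through 2025 is an assumption made for illustration, not a figure taken from the report.

```python
def project_market_size(base_value: float, annual_rate: float, years: int) -> float:
    """Compound a base value forward by a fixed annual growth rate."""
    return base_value * (1 + annual_rate) ** years


# Assumption: USD 16.0 billion in 2016 is the base, and growth compounds
# once per year through 2025 (nine periods).
projected_2025 = project_market_size(16.0, 0.192, 2025 - 2016)
print(f"Implied 2025 market size: USD {projected_2025:.1f} billion")
```

Changing the number of periods (for example, counting only 2017 to 2025) shifts the result noticeably, so any projection of this kind should state its base year.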

Visual search optimization tips

Here are some tips to help you optimize for visual search:

- Add multiple images to each product or topic page.

- Optimize the images for the web and swift page load.

- Consider adding raster images, and include a message and call to action (CTA) in the image so it is more compelling when viewed in Google Images or repurposed.

- Upload image XML sitemaps and ensure that product inventory is updated across all search engines and retailers.

- Maintain a logical site hierarchy that is connected through relevant internal links.

- Make sure your images are hosted on authoritative pages that respond to a specific user intent.

- Map keyword categories and themes to your images, and then use these queries to optimize image alt text, titles and captions. Put relevant keywords in the image file name.

- Develop a unique brand aesthetic across all visual assets. This will help search engines relate your brand to a particular style.

- If you use stock images, tailor them to ensure they are not identical to the hundreds of other instances of those exact images. Search engines will find it difficult to understand your image if it is replicated across the web in different contexts.

- Although visual search reporting is still very limited, keep a close eye on your image search traffic to keep track of any increases in demand.
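The image sitemap tip above can be sketched as a small Python script using only the standard library. This is a minimal illustration, not a complete sitemap generator: the function name and the example.com URLs are hypothetical, and it emits only the page location and image location elements from the sitemap and Google image sitemap namespaces.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"
IMAGE_NS = "http://www.google.com/schemas/sitemap-image/1.1"


def build_image_sitemap(pages: dict[str, list[str]]) -> str:
    """Return sitemap XML mapping each page URL to the images it hosts."""
    # Register prefixes so the output uses <url> and <image:image> tags.
    ET.register_namespace("", SITEMAP_NS)
    ET.register_namespace("image", IMAGE_NS)
    urlset = ET.Element(f"{{{SITEMAP_NS}}}urlset")
    for page_url, image_urls in pages.items():
        url = ET.SubElement(urlset, f"{{{SITEMAP_NS}}}url")
        ET.SubElement(url, f"{{{SITEMAP_NS}}}loc").text = page_url
        for image_url in image_urls:
            image = ET.SubElement(url, f"{{{IMAGE_NS}}}image")
            ET.SubElement(image, f"{{{IMAGE_NS}}}loc").text = image_url
    return ET.tostring(urlset, encoding="unicode")


# Hypothetical product page with two images.
sitemap_xml = build_image_sitemap(
    {
        "https://example.com/products/red-sneaker": [
            "https://example.com/img/red-sneaker-side.jpg",
            "https://example.com/img/red-sneaker-top.jpg",
        ]
    }
)
print(sitemap_xml)
```

Listing several images under one page entry mirrors the first tip in the list: multiple images per product or topic page.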

Voice search

Voice search has had much more publicity than its visual counterpart, fronted by glitzy demonstrations from the likes of Apple, Google and Amazon. In the “age of assistance,” it seems voice will be the preferred mode of access to AI-driven devices. Undoubtedly, some impressive statistics substantiate this claim:

Sixty-five percent of people who own an Amazon Echo or Google Home can’t imagine going back to the days before they had a smart speaker.

Voice commerce sales reached $1.8 billion in the US last year and are predicted to reach $40 billion by 2022.

Fifty-two percent of voice-activated speaker owners would like to receive information about deals, sales and promotions from brands.

These are still experimental times for voice search, and many brands are trying to ascertain just how much it will affect their industry. As with visual search, reporting is limited at the moment, but there are still plenty of opportunities for innovation. Brands need to think about how they want to sound, rather than just look. Voice search naturally opens up conversations, and it is certainly possible to foresee a future where digital assistants relay messages directly from brands, rather than just reading the text.

A step in this direction is the launch of the Speakable structured data format, now available in beta via Schema.org. Although it’s only available for news at the moment, it will surely open up to other industries after this test period.

Voice search optimization tips

Google’s guidelines point out some important points for any brand that wishes to optimize for voice search:

- Content indicated by speakable structured data should have concise headlines and/or summaries that provide users with comprehensible and useful information.

- If you include the top of the story in speakable structured data, we suggest that you rewrite the top of the story to break up information into individual sentences so that it reads more clearly for text to speech (TTS).

- For optimal audio user experiences, we recommend around 20 to 30 seconds of content per section of speakable structured data, or roughly two to three sentences.
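As a rough illustration of what speakable markup might look like, the Python sketch below builds a JSON-LD object using the Schema.org `SpeakableSpecification` type. The helper name, the example.com URL and the CSS selectors are all hypothetical; the selectors are assumed to point at a concise headline and a two-to-three-sentence summary, in line with the guidelines above.

```python
import json


def speakable_jsonld(name: str, url: str, css_selectors: list[str]) -> str:
    """Build JSON-LD marking which page sections suit text to speech."""
    data = {
        "@context": "https://schema.org/",
        "@type": "WebPage",
        "name": name,
        "url": url,
        "speakable": {
            "@type": "SpeakableSpecification",
            # Assumption: these selectors match short, self-contained passages.
            "cssSelector": css_selectors,
        },
    }
    return json.dumps(data, indent=2)


markup = speakable_jsonld(
    "Example headline",
    "https://example.com/news/example-story",
    [".headline", ".summary"],
)
print(markup)
```

The resulting JSON would typically be embedded in the page inside a `script` element of type `application/ld+json`.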

The concept of a “brand voice” looks set to take on a very literal dimension as voice search evolves into something more conversational.

Technical SEO for visual and voice search

If brands can’t predict the variety and volume of demand with precision, they must ensure they are in prime position to attract qualified traffic.

As we move into an era of ambient search, with consumers looking for instant information on the go, it is imperative that content can be served quickly and seamlessly. One technical consideration is that a higher quantity of pre-rendered content needs to be served to the user and to search engines. This is more important than in the past, when a significant amount of processing could occur within the browser.

To respond to (and even pre-empt) user queries via voice or image, pre-rendered content should also be delivered to search engine user agents. Structured data is often mentioned in relation to visual and voice search, with good reason. The premise of semantic search, which is an essential development for visual and voice search, is built on the idea of entities and structure. By understanding entities and how they are interconnected, a search engine can infer context and intent from search queries.
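One way to picture delivering pre-rendered content to search engine user agents is a simple check on the request's User-Agent header. The Python sketch below is only an illustration under stated assumptions: the function names and the short token list are hypothetical, and a production setup would need a maintained crawler list and must serve crawlers the same content users eventually see.

```python
# Assumption: a short, illustrative list of crawler user-agent tokens.
CRAWLER_TOKENS = ("googlebot", "bingbot")


def wants_prerendered(user_agent: str) -> bool:
    """Return True when the request appears to come from a search crawler."""
    ua = user_agent.lower()
    return any(token in ua for token in CRAWLER_TOKENS)


def choose_response(user_agent: str, prerendered_html: str, app_shell_html: str) -> str:
    """Pick the fully rendered page for crawlers, the app shell otherwise."""
    return prerendered_html if wants_prerendered(user_agent) else app_shell_html


googlebot_ua = "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
print(choose_response(googlebot_ua, "<html>full page</html>", "<html>shell</html>"))
```

Serving the pre-rendered version to every visitor, not just crawlers, avoids the branching entirely and is closer to the goal of fast content for both users and search engines.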

For visual search, Google’s Clay Bavor summarized the size of the challenge:

In the English language, there’s something like 180,000 words, and we only use 3,000 to 5,000 of them. If you’re trying to do voice recognition, there’s a really small set of things you actually need to be able to recognize. Think about how many objects there are in the world, distinct objects, billions, and they all come in different shapes and sizes.

Brands need to help Googlebot by structuring and labeling their own data so that it can be served instantly for relevant queries.

There are some vital structured data elements that brands should focus on for visual and voice search (if applicable):

- Price.

- Availability.

- Product name.

- Image.

- Logos.

- Social profiles.

- Breadcrumb navigation.
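Several of the elements listed above (product name, image, price and availability) map onto the Schema.org `Product` and `Offer` types. The Python sketch below shows one possible way to generate that markup as JSON-LD; the helper name and the sample product values are hypothetical, and logos, social profiles and breadcrumbs would need their own types, which are omitted here.

```python
import json


def product_jsonld(name: str, image_url: str, price: str, currency: str, in_stock: bool) -> str:
    """Build Product JSON-LD covering name, image, price and availability."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    data = {
        "@context": "https://schema.org/",
        "@type": "Product",
        "name": name,
        "image": [image_url],
        "offers": {
            "@type": "Offer",
            "price": price,
            "priceCurrency": currency,
            "availability": availability,
        },
    }
    return json.dumps(data, indent=2)


# Hypothetical product values for illustration.
product_markup = product_jsonld(
    "Red Sneaker",
    "https://example.com/img/red-sneaker-side.jpg",
    "49.99",
    "USD",
    in_stock=True,
)
print(product_markup)
```

Generating the markup from the same inventory data that drives the page helps keep price and availability consistent with what users actually see.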

Summary

Visual and voice search are taking hold for a host of intertwined reasons, both psychological and technological. They allow users to find new ideas in more effective and efficient formats. They also intersect with numerous technological trends, including digital assistants, artificial intelligence, and vertical search.

In the case of vertical search, the discovery of content within specific verticals is a natural fit for targeted information retrieval.

One of the prime benefits of both visual and voice search is that they simply create a platform for more effective communication with consumers. As the role of search expands to cover every step on the path to purchase, the number of search-based micro-moments will continue to proliferate. To capitalize, brands need a deep understanding of their consumers, a multimedia content strategy that caters to their audience’s requirements and the technical knowledge to communicate these messages to search engines through text, voice and images.

The future of search lies with voice, visual and vertical optimization. While that may sound disconcertingly nebulous, savvy marketers are defining what this new order means to them and acting to implement their strategies today.


by simon | Sep 12, 2017 | Digital Insights

Google has been spotted testing infinite scroll search results pages in mobile search.

For some searches, rather than showing the usual “Next” button at the bottom of search results, there is a “See more results” button.

Clicking on “See more results” loads more results right on the page the user is currently viewing, instead of taking them to a new page.

Google says it runs numerous tests every day, so we can only assume infinite scroll in mobile search results is nothing more than a test at this time.

We certainly cannot confirm this is something that will be rolled out permanently, but it is interesting nonetheless.

We’d like to thank Charity at Conductor for sending us this information. https://www.searchenginejournal.com/google-testing-infinite-scroll-mobile-search/212896/

by simon | Sep 12, 2017 | Digital Insights

Searchers will soon start seeing videos on Google Maps listings, as the company is giving Local Guides the ability to upload video with an Android device.

Videos on Google Maps can be viewed by users on iOS, Android, or desktop – but for the time being they can only be uploaded on Android.

Google offers a few suggestions for using this new feature:

“The possibilities are really exciting: Take viewers on a mini tour of a store you love, or show the bustling scene at your favorite neighborhood restaurant.”

Video reviews showcasing your favorite items are also an option, as well as videos offering general tips for new visitors.

This feature is rolling out gradually to Local Guides on Android. Local Guides are individuals who are committed to contributing to Google Maps.

Local Guides earn points for contributing photos, reviews, and information to Maps listings. As they earn points they can level up, which grants access to perks.

If you’re interested in joining the program you can sign up here. Local Guides can start adding video to Google Maps by following these steps:

- Search and select a place on Google Maps

- Scroll down and tap “Add a photo”

- Tap “Camera” and hold the shutter button for up to 10 seconds

Local Guides can also add videos that are already on their device by selecting “Folder” instead of “Camera.” Only the first 30 seconds of a video can be added.

https://www.searchenginejournal.com/google-introduces-video-google-maps-listings/212418/